Often you will get a “csv” file from someone to load into your Sql Server database. There are many ways to do this including: SSIS, using the import tool from SSMS, etc. Now there is another way using Import-DbaCsv from dbatools.

Read about what dbatools is from my previous posting here: DBATOOLS.IO….A DBA timesaver/luxury

Here is a quick example of loading data into your database.

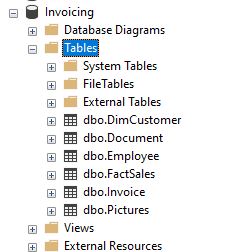

Looking in SSMS, I need to load a csv file into a staging table so it can be queried, or pushed to another table.

Here is what the file contents look like. A simple file with 4 columns of data about 4.4 million rows. Notice each field is wrapped in double quotes: ” “

To quickly load this into a new table, just use this command:

Import-DbaCsv -path c:\temp\billing_history2.csv -SqlInstance etlsqldb -Database Invoicing -Autocreatetable

-Path is the location of the file to import.

-SqlInstance is the server to load to.

-Database is which database

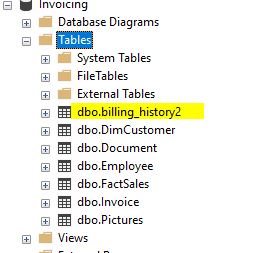

-AutoCreateTable is telling the command to create a new table. It uses the filename for the table name.

When the file is done importing you get a summary. This shows us it loaded 4.4 million rows in just over 47 seconds. Sure beats typing the information in!

Looking at the database, we can see the table got created.

Lets query the table. All of the data is there.

Looking at the table column types, we can see that it used the dreaded varchar(max) datatype. That is ok for this use since we will push this information to the correct tables later. It uses varchar(max) so there are not any length limits during the import or any issues putting a character into an INT field.

Another neat thing you can do is just import certain columns from the file into the database.

First I delete out all of the data from the table.

Now I use the following command to specify which columns I want to import.

$columns = @{

bill_id = ‘bill_id’

cur_bal = ‘cur_bal’}

Then we modify the command to use the -ColumnMap parameter and pass in the $Columns variable we created.

Import-DbaCsv -path c:\temp\billing_history2.csv -sqlinstance etlsqldb -database invoicing -ColumnMap $columns

After it finishes, we query the table and only the Bill_ID and Cur_bal columns have data.

If the table already exists and matches the CSV filename, you can leave off the -AutoCreatetable parameter and it will load the Billing_history2 table.

The only issue I sometimes have is if the file is not in the correct format. Often you will have a file like below without data enclosed in double quotes ” “.

To “fix” this file and enclose double quotes every field you can use this command: Import-Csv. I found this syntax on Stack Overflow a while back and do not remember which post it was, but I thank you whoever posted it.

import-csv C:\temp\bill_history.csv | export-csv C:\temp\billing_history2.csv -NoTypeInformation -Encoding UTF8

c:\temp\bill_history.csv is the original file that needs to be converted.

c:\temp\billing_history2.csv is the new file that will be created with double quotes.

After running the command above, your new file will be double quoted correctly.

Import-DbaCsv is another useful tool that dbatools provides. For more information about Import-DbaCsv, please follow this link: https://docs.dbatools.io/Import-DbaCsv

To learn more about dbatools, please follow this link: https://dbatools.io/